Kaal - Rate Limiter

A time-aware API rate limiter built with the Token Bucket algorithm. Each client IP gets its own bucket; requests are allowed only if tokens are available.

Timeline

2026

Role

Backend Developer

Status

Completed

Technology Stack

Java 21: Core implementation + concurrency-friendly runtime

Spring Boot 3.4.5: Service framework

Redis 8.0: Distributed token storage per client IP

Gradle 8.10: Build + dependency management

Key Challenges

- Designing time-based token refill logic (tokens refill continuously as time passes)

- Keeping per-client buckets consistent using Redis (distributed state)

- Integrating rate limiting cleanly at the gateway layer

- Returning a correct throttling response (HTTP 429) with predictable behavior
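The first challenge, continuous time-based refill, can be illustrated with a small in-memory bucket. This is a minimal sketch and not Kaal's actual code: the class name `TokenBucket`, the method `tryConsume`, and the injectable nanosecond clock are all assumptions made for illustration, and the Redis-backed state described above is deliberately left out.

```java
import java.util.function.LongSupplier;

/** Illustrative token bucket with continuous, time-based refill (hypothetical names). */
final class TokenBucket {
    private final double capacity;        // maximum tokens, i.e. the largest allowed burst
    private final double refillPerSecond; // tokens added per second of elapsed time
    private final LongSupplier nanoClock; // nanosecond time source, injectable for tests
    private double tokens;
    private long lastRefillNanos;

    TokenBucket(double capacity, double refillPerSecond, LongSupplier nanoClock) {
        this.capacity = capacity;
        this.refillPerSecond = refillPerSecond;
        this.nanoClock = nanoClock;
        this.tokens = capacity;           // start full so a new client can burst
        this.lastRefillNanos = nanoClock.getAsLong();
    }

    /** Consumes one token and returns true if one is available; otherwise returns false. */
    synchronized boolean tryConsume() {
        refill();
        if (tokens >= 1.0) {
            tokens -= 1.0;
            return true;
        }
        return false;
    }

    /** Adds tokens in proportion to the time elapsed since the last refill, capped at capacity. */
    private void refill() {
        long now = nanoClock.getAsLong();
        long elapsedNanos = now - lastRefillNanos;
        if (elapsedNanos > 0) {
            double elapsedSeconds = elapsedNanos / 1_000_000_000.0;
            tokens = Math.min(capacity, tokens + elapsedSeconds * refillPerSecond);
            lastRefillNanos = now;
        }
    }
}
```

In production the clock would typically be `System::nanoTime`; passing it in as a `LongSupplier` keeps the refill logic deterministic under test. Refilling lazily on each request avoids a background timer per bucket.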

Key Learnings

- Token Bucket fundamentals (capacity, refill rate, burst handling)

- Using Redis for shared rate-limit state across instances

- Gateway-first architecture for cross-cutting concerns like rate limiting

- Building deterministic request-control systems for microservices

Overview

A distributed API rate limiter built using the Token Bucket algorithm.

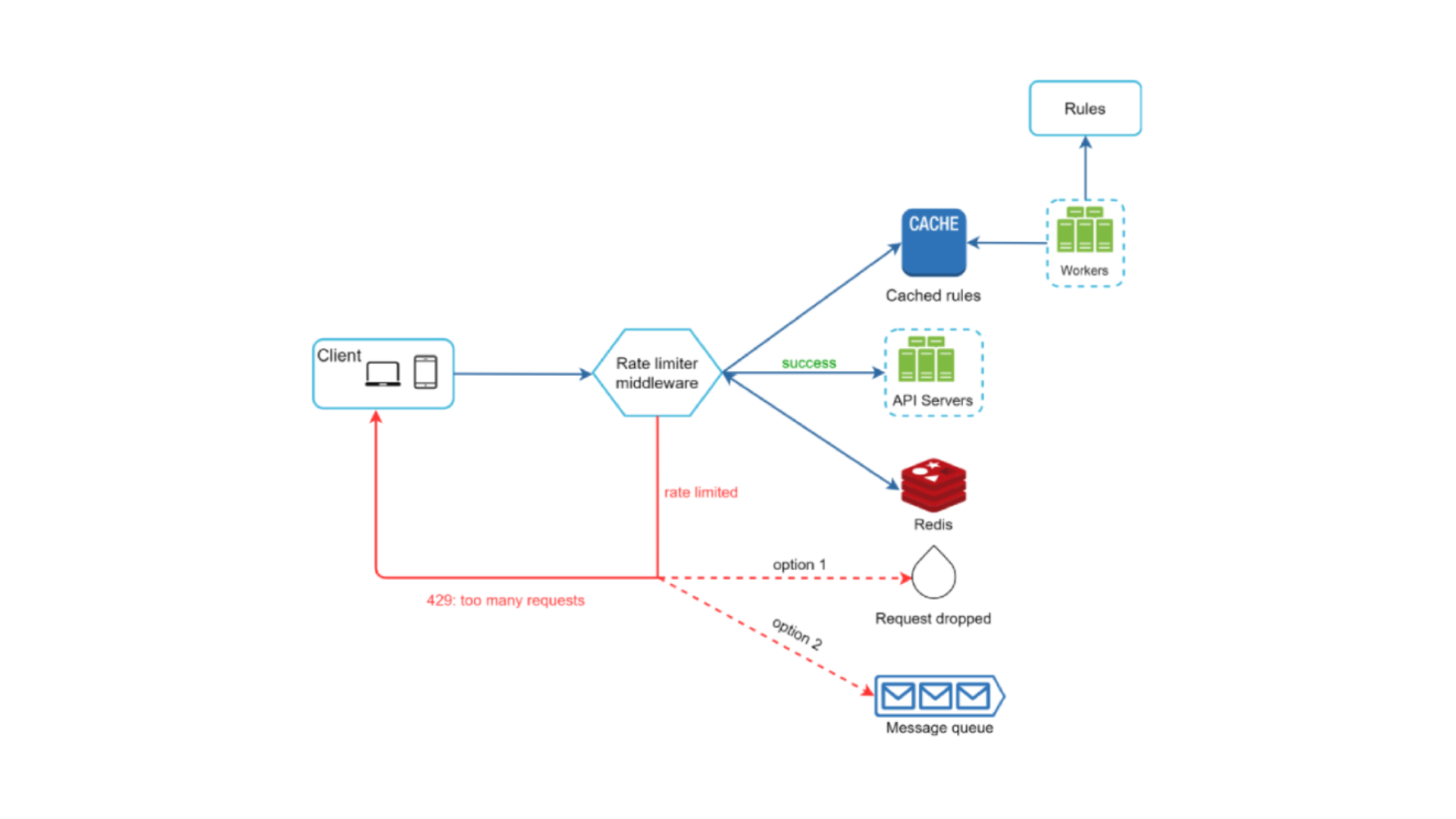

Kaal controls request flow at the gateway level and protects backend services from abuse in a scalable and predictable way.

What It Can Do

- Per-IP Rate Limiting: Each client IP gets its own token bucket

- Time-based Token Refill: Tokens regenerate automatically based on time

- Gateway-level Enforcement: Requests are filtered before hitting backend services

- Distributed Support with Redis: Consistent limits across multiple instances

- HTTP 429 Handling: Clean rejection when rate limits are exceeded
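The per-IP and HTTP 429 behaviour listed above can be sketched as a single decision function. This is an illustrative, in-memory stand-in under stated assumptions: the class `PerIpRateLimiter`, its `check` method, and the millisecond clock are hypothetical names, and a `ConcurrentHashMap` takes the place of the Redis storage that Kaal uses to share state across instances.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ConcurrentMap;
import java.util.function.LongSupplier;

/** Illustrative per-IP limiter: one bucket per client IP, answering 200 or 429 (hypothetical names). */
final class PerIpRateLimiter {
    static final int OK = 200;
    static final int TOO_MANY_REQUESTS = 429;

    /** Mutable bucket state for a single client IP. */
    private static final class Bucket {
        double tokens;
        long lastRefillMillis;

        Bucket(double tokens, long lastRefillMillis) {
            this.tokens = tokens;
            this.lastRefillMillis = lastRefillMillis;
        }
    }

    private final ConcurrentMap<String, Bucket> buckets = new ConcurrentHashMap<>();
    private final double capacity;
    private final double refillPerSecond;
    private final LongSupplier clockMillis;

    PerIpRateLimiter(double capacity, double refillPerSecond, LongSupplier clockMillis) {
        this.capacity = capacity;
        this.refillPerSecond = refillPerSecond;
        this.clockMillis = clockMillis;
    }

    /** Returns the HTTP status a gateway would send for one request from clientIp. */
    int check(String clientIp) {
        long now = clockMillis.getAsLong();
        // Each IP gets its own bucket, created full on first sight.
        Bucket bucket = buckets.computeIfAbsent(clientIp, ip -> new Bucket(capacity, now));
        synchronized (bucket) {
            // Refill in proportion to elapsed time, never exceeding capacity.
            double elapsedSeconds = Math.max(0L, now - bucket.lastRefillMillis) / 1000.0;
            bucket.tokens = Math.min(capacity, bucket.tokens + elapsedSeconds * refillPerSecond);
            bucket.lastRefillMillis = Math.max(bucket.lastRefillMillis, now);
            if (bucket.tokens >= 1.0) {
                bucket.tokens -= 1.0;
                return OK;
            }
            return TOO_MANY_REQUESTS;
        }
    }
}
```

A gateway filter could call `check` with the caller's IP and short-circuit the request whenever the result is 429. In a multi-instance deployment, the read-refill-consume step would need to run atomically against the shared store rather than under a local lock.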

Why I Built This

I built Kaal to understand how production systems protect APIs from traffic spikes and misuse.

This project explores rate-limiting algorithms, distributed state management with Redis, gateway-first design patterns, and core system design principles such as scalability, fault tolerance, and consistency used in modern backend architectures.